Over the past four years we’ve been involved in designing and evaluating both Coventry UK City of Culture 2021 (UK CoC 2021) and Birmingham 2022 Festival, part of the Birmingham 2022 Commonwealth Games.

But why evaluate? What benefits does it bring? Apart from the obvious argument of ensuring value for (usually taxpayers’) money, these are the main benefits we can identify.

Quantifying the impacts of a single large investment in culture in a specific place over a limited timescale, by asking:

- What has the investment enabled that would not have happened without it?

- What has the intervention achieved that sustained investment in ongoing cultural organisations has not / cannot?

Capturing and reporting on how different people have experienced the event differently and what their stories tell us.

Our Learnings: Five Keys to Successful Evaluation

With our experience of collecting and collating evaluation data for both of these mega events, we’ve learned the keys to successful evaluation:

Having a Sound Theory of Change Model from the Outset

Both Coventry 2021 and Birmingham 2022 had a well-developed Theory of Change model available to the evaluation team from the first day, which guided programming and investment decisions. Of course, it needed nuancing as the programmes emerged because, in both cases, it was written before any commissions were secured or programming boots hit the ground.

Despite the many adaptations to the programme that the pandemic forced on Coventry, the originally stated outcomes and impacts provided a focus for the changes that had to be made to the cultural responses and programming.

Developing a Robust Evaluation Framework

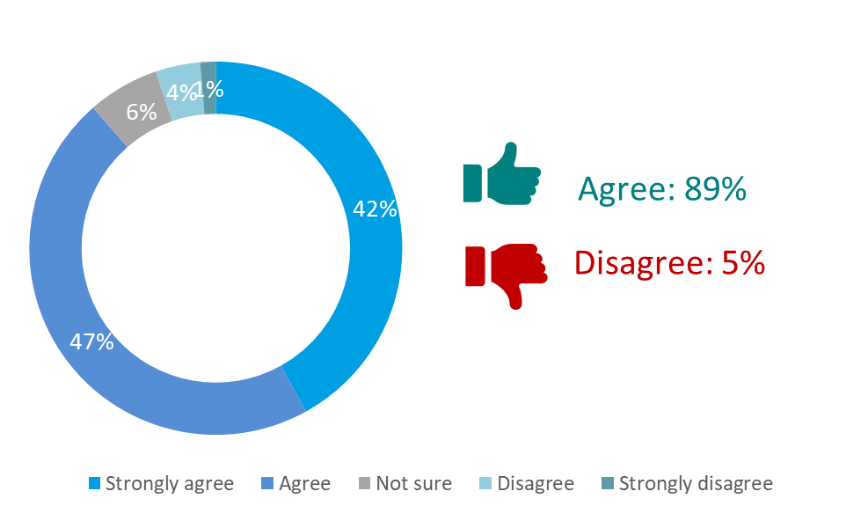

A successful Evaluation Framework takes each of the outcomes named in the Theory of Change and identifies what data needs to be collected to evidence each outcome. Data can be quantitative or qualitative and needs to be gathered across multiple platforms or touchpoints.

A good framework should also identify where ‘baseline’ metrics will be found. For example, if the outcome is to increase or improve something from x to y, the starting point needs to be explicit. What data is currently available to tell you this? And if it doesn’t exist, how might you find out?

The framework should also identify where numbers alone are not going to provide suitable evidence. For both projects, case studies and other qualitative work have been crucial to understanding the depth and scale of delivery and impact.
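As a rough illustration of the idea, a framework like this can be held as a simple table of indicators and then checked for gaps. The sketch below is only an assumption about how that might look: the `Indicator` fields, the helper functions and the example outcomes are all hypothetical and are not taken from either evaluation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Indicator:
    outcome: str                           # outcome named in the Theory of Change
    measure: str                           # evidence to be collected
    kind: str                              # "quantitative" or "qualitative"
    baseline_source: Optional[str] = None  # where the starting point comes from, if known


def missing_baselines(framework):
    """Quantitative indicators with no identified baseline source."""
    return [i for i in framework
            if i.kind == "quantitative" and i.baseline_source is None]


def outcomes_without_qualitative(framework):
    """Outcomes currently evidenced by numbers alone."""
    all_outcomes = {i.outcome for i in framework}
    covered = {i.outcome for i in framework if i.kind == "qualitative"}
    return sorted(all_outcomes - covered)


# Entirely made-up example entries, for illustration only.
framework = [
    Indicator("Hypothetical outcome A", "attendance count",
              "quantitative", "hypothetical prior survey"),
    Indicator("Hypothetical outcome A", "participant case study", "qualitative"),
    Indicator("Hypothetical outcome B", "share of first-time attenders",
              "quantitative"),
]

for indicator in missing_baselines(framework):
    print("No baseline yet:", indicator.measure)
for outcome in outcomes_without_qualitative(framework):
    print("Numbers only:", outcome)
```

A check like this simply surfaces the two questions raised above: where the starting point will come from, and which outcomes rely on numbers alone.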

Designing Innovative Data Collection Tools and Practices

The way you run an event will have a significant impact on your ability to collect data about attenders. Birmingham 2022 Festival decided that the whole programme would be free and unticketed. So we knew we had to count people and gather feedback data ‘on the ground’ rather than rely on sales data reports and emailing surveys after the event.

With more than 160 projects over 6 months, we had to develop a distributed model of evaluation, with every project engaging with the evaluation – collecting numbers of attenders or participants and gathering feedback. The effort required to achieve this across the Festival was substantial and it was impressive that every project managed to do it.

We provided standard data collection tools and worked with individual projects as necessary to adapt the tools for particular activities or audiences. For example, for a project involving adults with learning disabilities, it was essential that their voices were included in the evaluation, but we needed different methods of collecting them.

Having a Central Point of Truth

Both Coventry 2021 and Birmingham 2022 had an evaluation co-ordinator as an integral part of the delivery team. Their role was to advocate for evaluation within the organisation, ensuring that due consideration was given at planning and delivery stages to gathering the required evidence.

They also had to ensure data was collected and delivered on time; to quality assure the data as it came in, checking and validating the figures supplied by each project; and to manage the subcontractors and suppliers to make sure everyone was on track.

All the data collected was fed into a central point which became the ‘single point of truth’ used to develop the evaluation statistics. The data could then be sliced and diced in various ways, but the totals would always add up.
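To make the 'always add up' point concrete, here is a minimal sketch, assuming a flat list of records with a validation flag. The field names and all the figures are invented for illustration and do not reflect the Festival's actual data or tooling.

```python
from collections import defaultdict

# Made-up records as they might arrive from individual projects.
records = [
    {"project": "P1", "month": "2022-06", "attenders": 120, "validated": True},
    {"project": "P1", "month": "2022-07", "attenders": 80, "validated": True},
    {"project": "P2", "month": "2022-06", "attenders": 200, "validated": True},
    {"project": "P3", "month": "2022-07", "attenders": 999, "validated": False},
]


def central_store(incoming):
    """Admit only validated records into the single point of truth."""
    return [r for r in incoming if r["validated"]]


def slice_by(store, key):
    """Total attenders grouped by one dimension of the store."""
    totals = defaultdict(int)
    for record in store:
        totals[record[key]] += record["attenders"]
    return dict(totals)


store = central_store(records)
total = sum(r["attenders"] for r in store)
by_project = slice_by(store, "project")
by_month = slice_by(store, "month")

# Every slice is computed from the same validated store,
# so each one sums to the same overall total.
assert sum(by_project.values()) == total
assert sum(by_month.values()) == total
```

Because every breakdown is derived from the one validated store rather than from separate spreadsheets, the slices cannot drift apart from the headline figure.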

Because this single data repository held only validated data, we were happy to supply that data back out to other evaluators working on specific Festival projects. We could also create a Festival-wide dashboard that could be updated monthly throughout the project. The repository is now being used to create bespoke funder reporting, as well as the final evaluation reports for the Festival.

Knitting Together the What and the Why

As those involved in data analysis are only too aware, numbers can tell many stories, so the challenge in undertaking evaluation is to decide when a story is best told in numbers and when it needs a different approach.

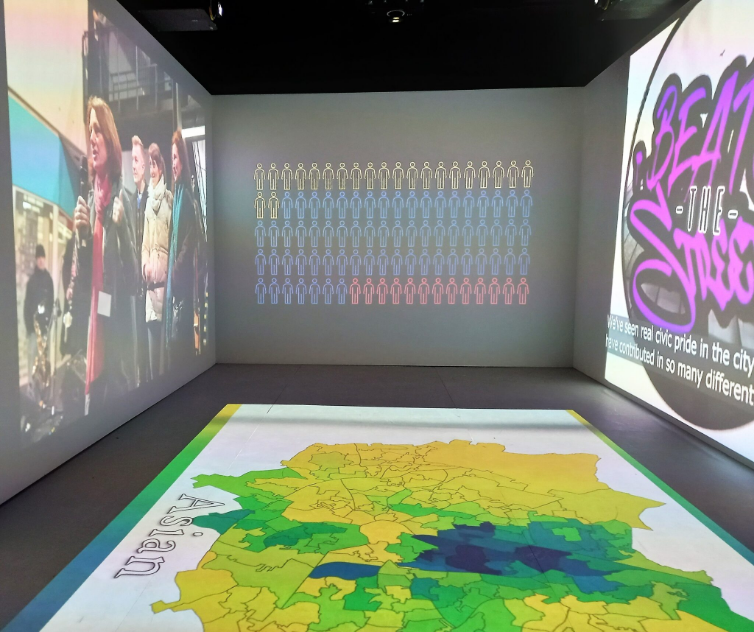

In Coventry and Birmingham, qualitative case studies were a crucial component of the evaluation. These included the observation of projects in development, delivery and close-down phases; interviewing artists and producers; and testing with audiences/participants the extent to which the creative intent was delivered.

Being able to tell the story of how investment in culture and creativity can radically change places and people for the future is essential to the future growth of the sector. But we all need to play a part in unearthing, gathering, illuminating and sharing the evidence we have to make that story the richest and most compelling it can be.

Acknowledgements

This article was written by Katy Raines – Chief Executive Officer of Indigo Cultural Consulting Ltd – and Professor Jonothan Neelands – Academic Director Research and Evaluation at Warwick Business School.

It was originally published in Arts Professional magazine.